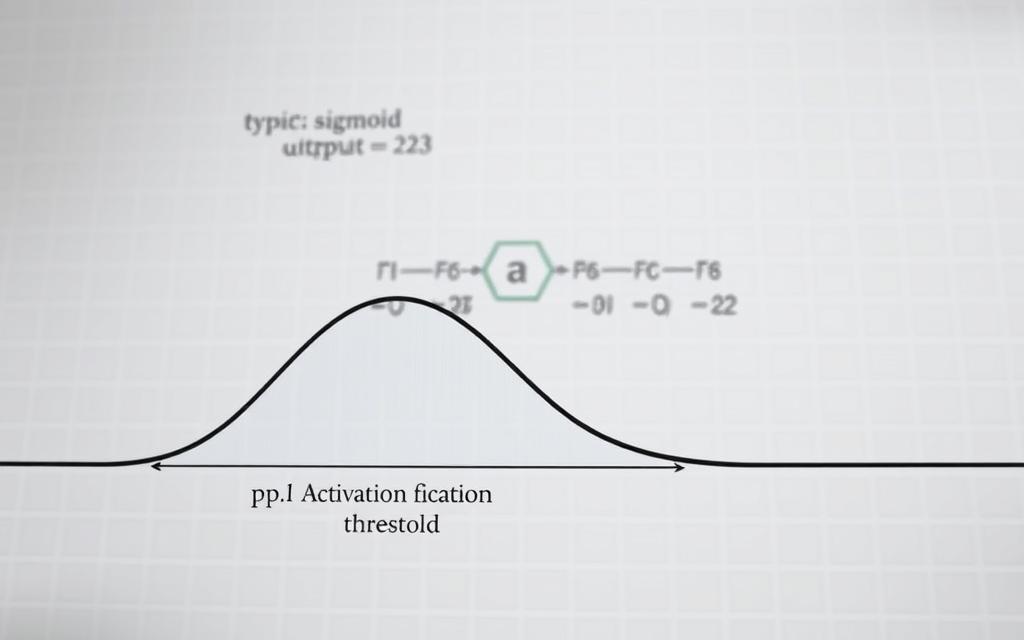

At the heart of every neural network lies a critical component: the activation function. These mathematical gatekeepers decide how strongly a neuron fires, transforming the weighted sum of its inputs into a non-linear output. The same logic shows up in autoencoders, where activation functions shape how the network behaves.
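As a minimal sketch of that gatekeeping step, the snippet below computes one neuron's weighted sum and passes it through two common activations. The `neuron` helper and the input, weight, and bias values are illustrative assumptions, not taken from any particular library or model.

```python
import numpy as np

def sigmoid(z):
    # Squashes any real number into the range (0, 1).
    return 1.0 / (1.0 + np.exp(-z))

def relu(z):
    # Passes positive values through and clamps negatives to zero.
    return np.maximum(0.0, z)

def neuron(x, w, b, activation):
    # Hypothetical helper: weighted sum of inputs plus bias, then activation.
    z = np.dot(w, x) + b
    return activation(z)

# Illustrative values chosen so the weighted sum is negative (about -1.2).
x = np.array([0.5, -1.0, 2.0])
w = np.array([0.4, 0.3, -0.6])
b = 0.1

relu_out = neuron(x, w, b, relu)        # negative sum, so ReLU outputs 0.0
sigmoid_out = neuron(x, w, b, sigmoid)  # roughly 0.23
print(relu_out, sigmoid_out)
```

With the same weighted sum, ReLU silences the neuron entirely while sigmoid still emits a small positive signal, which is one way the choice of activation changes what a layer passes on.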

Activation functions introduce non-linearity, enabling neural networks to model intricate relationships in data. Without them, a stack of layers collapses into a single linear transformation, no matter how deep the network is. This capability is essential for tasks like image classification and natural language processing. The choice of activation function can significantly affect a model’s accuracy and performance.
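To make the point about non-linearity concrete, here is a small sketch using hand-picked weights (the matrices `W1` and `W2` and the input `x` are assumptions chosen for illustration). Two linear layers applied in sequence give the same result as one merged linear layer, while inserting a ReLU between them produces something no single linear layer could.

```python
import numpy as np

def relu(z):
    return np.maximum(0.0, z)

# Hand-picked illustrative weights: layer 1 computes (x1 - x2, x2 - x1),
# layer 2 sums its two inputs.
W1 = np.array([[1.0, -1.0],
               [-1.0, 1.0]])
W2 = np.array([[1.0, 1.0]])
x = np.array([2.0, 1.0])

# Two linear layers in sequence...
stacked = W2 @ (W1 @ x)
# ...are equivalent to one layer whose weights are the product W2 @ W1.
collapsed = (W2 @ W1) @ x
print(np.allclose(stacked, collapsed))  # True: depth added nothing

# With a ReLU in between, the network computes |x1 - x2| instead,
# which is not a linear function of the input.
with_relu = W2 @ relu(W1 @ x)
print(stacked, with_relu)
```

Here the purely linear stack maps every input to zero, because `W2 @ W1` is the zero matrix, whereas the ReLU version returns the absolute difference of the two inputs.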

Understanding these functions is key to mastering machine learning. They bridge the gap between raw data and actionable insights, making them indispensable in modern AI applications.

That concept is easier to apply once you relate it to neural networks in a model-building workflow.