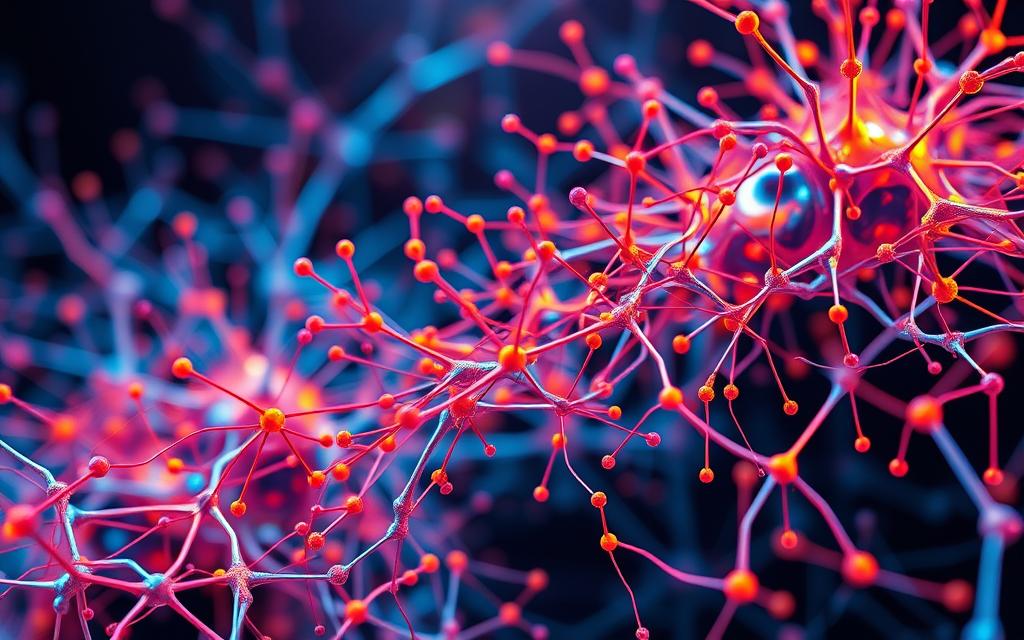

In today’s tech-driven workforce, AI literacy is no longer optional; it is essential. At the heart of modern AI advances are neural networks. These systems, loosely inspired by biological neurons, power products such as Google’s search algorithm and IBM’s Granite AI models. Understanding them also makes it easier to approach deep learning models like BERT.

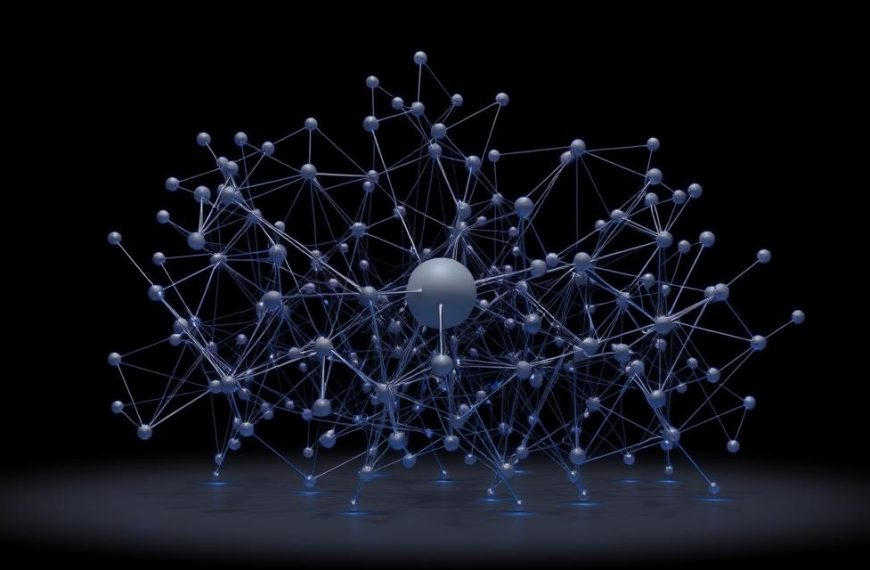

Mastering this field requires a blend of theory and practice. Structured learning paths, such as Andrew Ng’s Deep Learning Specialization, provide a solid foundation. Hands-on projects, like coding with TensorFlow or Keras, reinforce conceptual knowledge.

From their inception over 80 years ago to their current applications, neural networks have evolved significantly. Understanding both their biological inspiration and artificial implementation is key to unlocking their potential.
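To make the link between biological inspiration and artificial implementation concrete, here is a minimal sketch of a single artificial neuron in plain Python. The function names (`sigmoid`, `neuron`) and the example weights are illustrative assumptions, not taken from any particular library or course.

```python
import math


def sigmoid(z: float) -> float:
    """Squash any real number into the range (0, 1)."""
    return 1.0 / (1.0 + math.exp(-z))


def neuron(inputs: list[float], weights: list[float], bias: float) -> float:
    """Compute a weighted sum of the inputs plus a bias, then apply sigmoid.

    Loosely mirrors a biological neuron: incoming signals are combined,
    and the strength of the combined signal sets the output level.
    """
    z = sum(x * w for x, w in zip(inputs, weights)) + bias
    return sigmoid(z)


# Hypothetical example values: two inputs, two weights, no bias.
output = neuron([1.0, 0.0], [0.5, -0.5], 0.0)
print(f"neuron output: {output:.4f}")
```

Frameworks such as TensorFlow and Keras stack many of these units into layers and learn the weights from data rather than setting them by hand.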

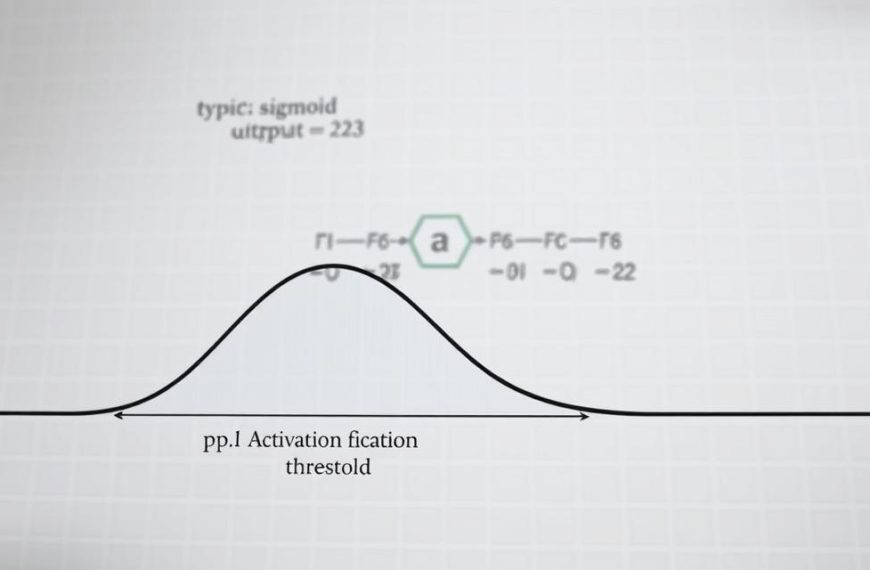

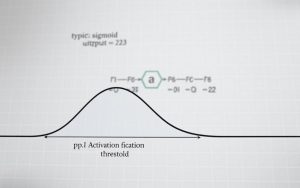

That concept is easier to apply once you relate it to what neural networks actually do in a model-building workflow.