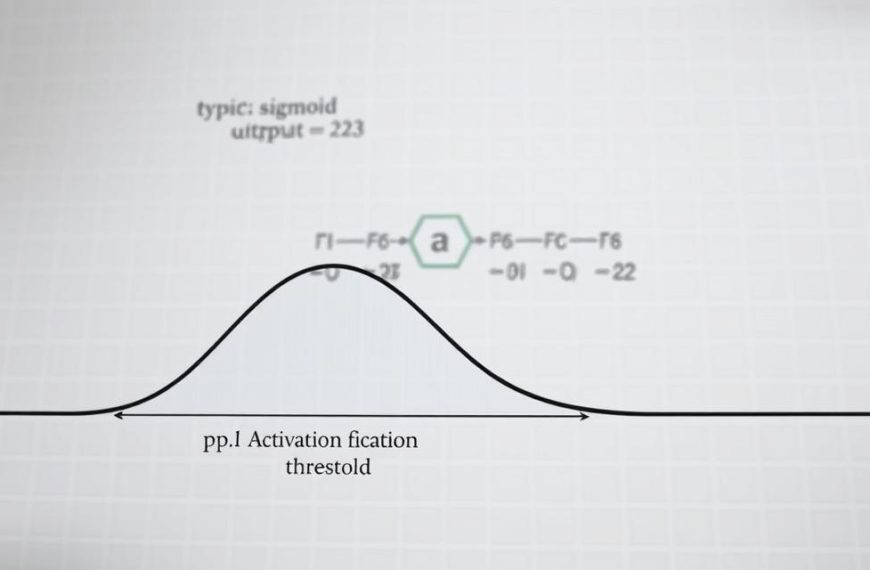

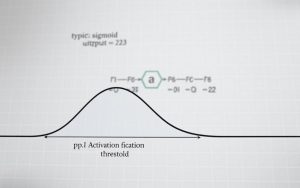

Understanding the fundamentals of neural networks is essential for anyone working with modern machine learning. These models power everything from image recognition to complex decision-making systems. By learning to build neural structures yourself, you gain insight into their inner workings and applications. Choosing a suitable activation function is one of the first design decisions you need to get right.

Key components like layers, activation functions, and propagation mechanisms form the backbone of any network. Frameworks like PyTorch simplify the process, offering tools like nn.Module for creating complex architectures. For instance, a classifier for the FashionMNIST dataset takes 28×28 grayscale images as input and can pass them through 512-node hidden layers to predict one of ten clothing classes.
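As a rough illustration of the components named above, here is a minimal sketch of an nn.Module subclass sized for 28×28 inputs and 512-node hidden layers. The class name `FashionClassifier`, the choice of two hidden layers, and the ReLU activation are assumptions made for this example, and the input batch is random noise rather than real FashionMNIST images.

```python
import torch
from torch import nn


class FashionClassifier(nn.Module):  # hypothetical name, for illustration only
    def __init__(self, hidden_size: int = 512, num_classes: int = 10):
        super().__init__()
        # Turn each 1x28x28 image into a flat vector of 784 values.
        self.flatten = nn.Flatten()
        # Assumed layout: two hidden layers with ReLU activations.
        self.layers = nn.Sequential(
            nn.Linear(28 * 28, hidden_size),
            nn.ReLU(),
            nn.Linear(hidden_size, hidden_size),
            nn.ReLU(),
            nn.Linear(hidden_size, num_classes),
        )

    def forward(self, x: torch.Tensor) -> torch.Tensor:
        # Forward propagation: flatten, then apply the stacked layers.
        return self.layers(self.flatten(x))


model = FashionClassifier()
batch = torch.rand(4, 1, 28, 28)  # random stand-in for four grayscale images
logits = model(batch)
print(logits.shape)  # torch.Size([4, 10])
```

The model returns one raw score (logit) per class for each image. Turning those scores into a trained classifier still requires a loss function, an optimizer, and a training loop, none of which are shown here.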

Classic exercises, such as solving the XOR gate problem with NumPy, show why a hidden layer matters: XOR is not linearly separable, so a single-layer model cannot represent it. Whether you’re using PyTorch or coding from scratch, a solid grasp of Python and linear algebra is crucial. This guide will walk you through both approaches, equipping you with the skills to tackle diverse challenges.
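To make the XOR example concrete, the sketch below runs a forward pass through a tiny two-layer network in NumPy. The weights are hand-picked for illustration rather than learned by training, and the 2-2-1 layout with sigmoid activations is an assumption; it only demonstrates that one hidden layer is enough to represent XOR.

```python
import numpy as np


def sigmoid(z):
    """Squash values into the range (0, 1)."""
    return 1.0 / (1.0 + np.exp(-z))


# All four XOR input pairs, one per row.
X = np.array([[0, 0], [0, 1], [1, 0], [1, 1]], dtype=float)

# Hand-picked (not learned) weights, chosen only for illustration.
# Hidden unit 0 approximates OR, hidden unit 1 approximates AND.
W1 = np.array([[20.0, 20.0],
               [20.0, 20.0]])      # shape: (inputs, hidden units)
b1 = np.array([-10.0, -30.0])

# The output fires when OR is on and AND is off, which is XOR.
W2 = np.array([[20.0], [-20.0]])   # shape: (hidden units, outputs)
b2 = np.array([-10.0])

hidden = sigmoid(X @ W1 + b1)       # hidden-layer activations
output = sigmoid(hidden @ W2 + b2)  # network output in (0, 1)
predictions = output.round().astype(int).ravel()
print(predictions)  # [0 1 1 0]
```

A from-scratch implementation would normally start from random weights and adjust them with backpropagation; fixing the weights by hand keeps this example deterministic and easy to check.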

These concepts are easier to apply once you see how they fit into an actual model-building workflow.